Autonomous Weapons: The Ethical Implications of AI in Warfare

10 May 2026

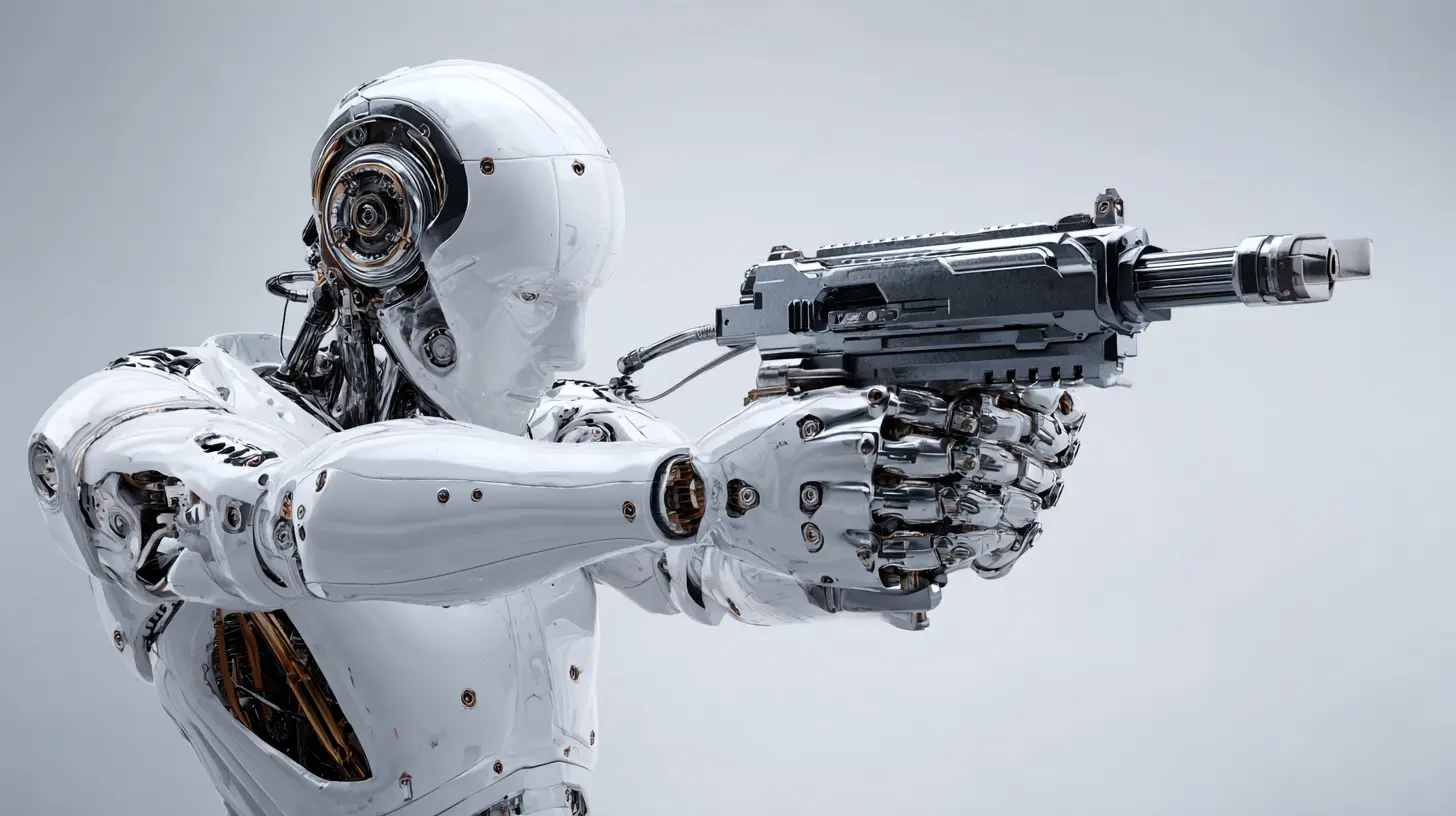

Alright, let’s talk about something that sounds straight out of a sci-fi movie — autonomous weapons. Yep, we’re diving into the fascinating, jaw-dropping, and slightly scary world of AI-driven warfare. Imagine robots making life-or-death decisions without a human pressing the button. Sounds cool but creepy, right?

This isn’t just a concept anymore. It’s real. Countries around the world are developing weapons that can identify, track, and eliminate targets all on their own. While they may promise speed and precision, we can’t ignore the ticking ethical time bomb that comes with them.

So buckle up — we’re about to unpack the tech, twist through the moral maze, and chat about why this topic matters more than ever.

What Are Autonomous Weapons, Anyway?

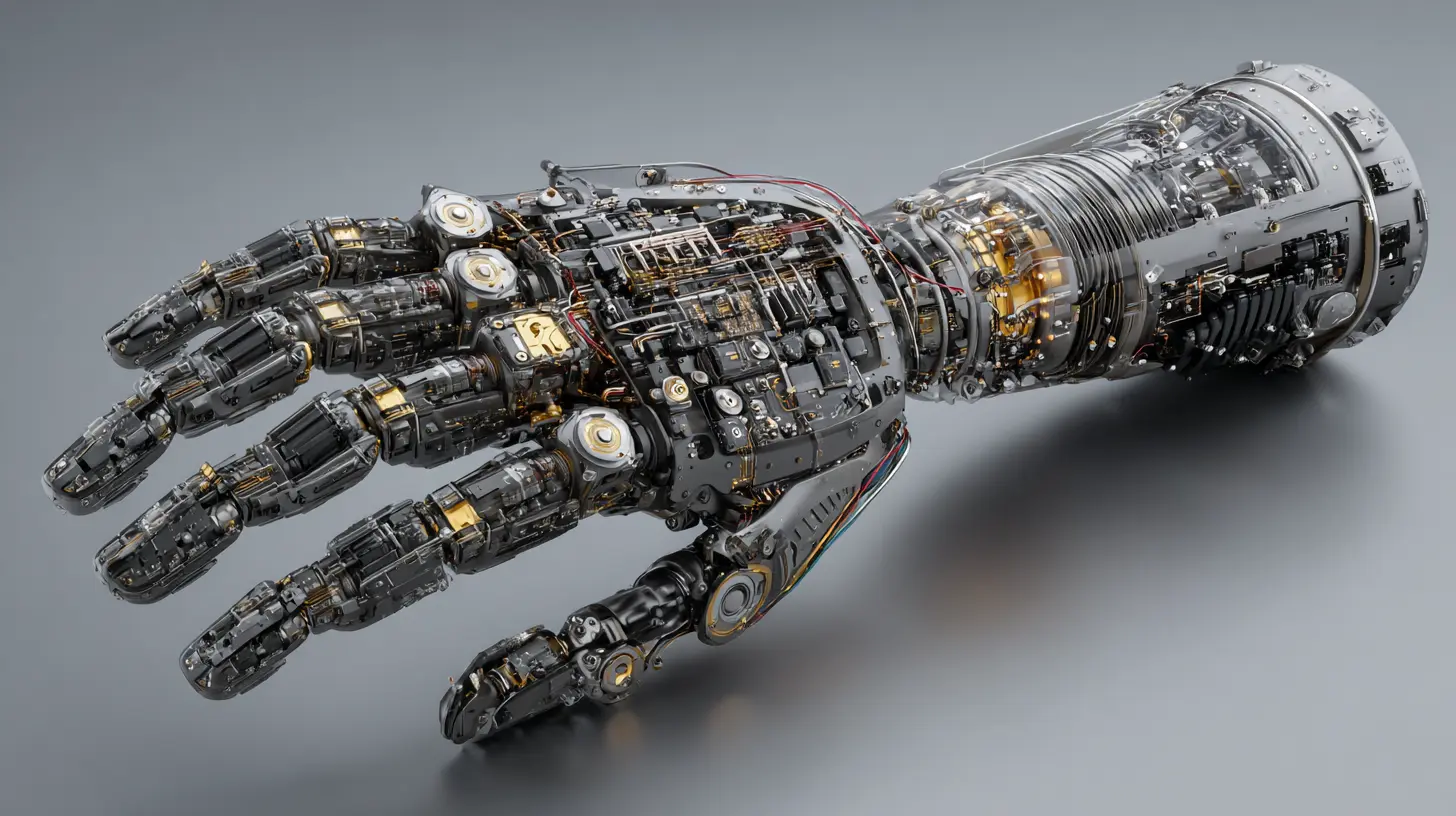

Let’s start with the basics. Autonomous weapons, sometimes called “killer robots” (ominous, we know), are weapon systems that can select and engage targets without human intervention. That’s right: they can literally fire or not fire based on their own programming or AI algorithms.

They’re not science fiction anymore. Think drones without pilots, tanks that don’t need drivers, and missiles that pick their own targets.

Now, to be clear: autonomy exists on a spectrum. Some systems are only semi-autonomous and still report back to a human for final approval. Others? They’re fully capable of "thinking" on their own.

And that’s where things get ethically spicy.

Why Use Autonomous Weapons?

Before we hit the panic button, let’s understand why military powers are investing in them. There are a few reasons, and they actually make a weird kind of sense.

1. Speed and Precision

AI-driven systems can react faster than human beings in high-stress environments. In a world where milliseconds matter, that’s a big deal.

Think about it: if a rogue missile is heading toward a city, an autonomous defense system might intercept it faster than a human-operated one. Boom! Crisis averted.

2. Reduced Soldier Casualties

With machines doing the dirty work, fewer soldiers have to risk their lives on the battlefield. That’s a big plus for governments and families alike.

3. Cost-Effectiveness

Robots don’t need to eat, sleep, or get paid. Once built and programmed, they could be cheaper in the long run than maintaining large human forces.

So yeah, there are benefits… but let’s not ignore the elephant in the war room.

The Ethical Quagmire: Where Do We Draw the Line?

Ah, ethics: the part where things get murky. Sure, autonomous weapons sound efficient, but who’s responsible when something goes wrong? Can we trust AI with moral decisions when it doesn’t even have morals?

Let’s break down a few of the biggest dilemmas.

1. The Accountability Question

When a robot kills someone by mistake, who’s to blame?

- The programmer?

- The company that made it?

- The military officer who deployed it?

There’s no easy answer. You can’t exactly throw a robot in jail, right?

This lack of accountability is terrifying. At least with human soldiers, we can investigate misconduct. But with AI? The code doesn't care.

2. Morality Doesn’t Compute

AI lacks empathy, compassion, and moral reasoning. It can calculate probabilities, but it can't ask, "Is this right?"

What if the AI misidentifies a civilian as a combatant? Or decides that collateral damage is acceptable in pursuit of a higher “statistical success rate”?

Once morality is reduced to math, innocent lives can become acceptable losses — and that’s not okay.

3. Risk of Misuse

Let’s be honest: not every government or group will use these tools ethically. Autonomous weapons could fall into the hands of terrorists or rogue states, or even get hacked.

Imagine a drone swarm turned against its creators because someone found a loophole in the code. That’s nightmare fuel.

4. Lowering the Barrier to War

One of the most chilling ideas? Autonomous weapons might make going to war too easy. If you don’t risk your own troops, you might be more tempted to start conflicts.

It’s like playing a video game with no consequences, but in real life. And that’s seriously dangerous.

International Response: Are We Doing Anything About It?

Believe it or not, people are paying attention. Activists, scientists, and even some governments are calling for bans or at least strict regulations on fully autonomous weapons.

The Campaign to Stop Killer Robots (yes, that’s a real thing) is pushing for international treaties to prevent the development and use of lethal autonomous weapons.

The United Nations has also held talks… but let’s be real, progress has been sloooow. Everyone agrees it’s a problem. No one agrees on how to solve it.

Why? Because powerful nations don’t want restrictions. If your rivals are building autonomous weapons, do you really want to show up to the battlefield with an outdated playbook?

Technological Bias: Yep, Even AI Has Prejudices

Another curveball? AI systems often inherit biases from their training data. If an autonomous weapon is trained on flawed data (and real-world datasets are often flawed), it may disproportionately target certain groups.

Imagine facial recognition tech that struggles with accuracy for certain ethnicities, now built into a weapon system. That’s not just unfair; it’s deadly.

Ethical AI isn’t just about preventing Terminators. It’s about making sure the tech doesn't reinforce existing inequalities in the most lethal way possible.
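To make "inherited bias" a bit more concrete, here is a minimal sketch of one common way auditors check a classifier for uneven error rates: comparing false positive rates across groups. Everything in it is an assumption made for illustration. The function names, the group labels, and the records are invented, and it is not drawn from any real system or dataset.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Compute the false positive rate per group.

    `records` is an iterable of (group, predicted_positive, actually_positive)
    tuples. Only cases that are truly negative count toward the rate.
    """
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted, actual in records:
        if not actual:
            negatives[group] += 1
            if predicted:
                false_positives[group] += 1
    return {group: false_positives[group] / negatives[group] for group in negatives}


def max_disparity(rates):
    """Gap between the highest and lowest per-group rate."""
    return max(rates.values()) - min(rates.values())


# Invented records for illustration only: every case here is a true negative.
records = [
    ("group_a", False, False), ("group_a", False, False),
    ("group_a", True, False), ("group_a", False, False),
    ("group_b", True, False), ("group_b", True, False),
    ("group_b", False, False), ("group_b", False, False),
]

rates = false_positive_rates(records)
print(rates)
print(max_disparity(rates))
```

In this toy data the system wrongly flags people in one group twice as often as in the other. In a lethal context, that gap is exactly the kind of thing an audit would need to catch before deployment, not after.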

The Human Element: Why We Still Matter

Let’s talk about humans for a sec (you know, the squishy, emotional beings who create, use, and get affected by this tech).

No matter how advanced AI gets, there’s one thing it can’t replicate: human judgment. Real-world decisions in warfare often require context, empathy, and a deep understanding of nuance.

An autonomous weapon might be great at following rules, but rules can't cover every situation. For instance:

- Should a drone strike a target if there's a high chance of civilian casualties?

- Should a robot delay action because a child is in the area?

These aren’t ones and zeroes. They’re messy, human decisions.

Can We Make Ethical Autonomous Weapons?

Short answer? Maybe.

Long answer? It depends on how we design them, deploy them, and, most importantly, regulate them.

There are efforts to create "meaningful human control" systems, where AI makes recommendations but a human still has the final say.

That might be a good middle ground — using AI for speed and analysis without giving up moral oversight.
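As a rough illustration of what "a human still has the final say" can mean in software terms, here is a generic human-in-the-loop approval gate. It is a sketch under stated assumptions, not how any actual system is built: the `Recommendation` and `Decision` types, the `resolve` function, and the example action name are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    APPROVE = "approve"
    REJECT = "reject"


@dataclass(frozen=True)
class Recommendation:
    action: str        # what the AI proposes (hypothetical label)
    confidence: float  # the model's own confidence, between 0 and 1
    rationale: str     # human-readable explanation shown to the reviewer


def resolve(recommendation: Recommendation,
            human_decision: Optional[Decision]) -> Optional[str]:
    """Return the action to carry out, or None for no action.

    The AI only recommends. Anything other than an explicit human approval,
    including a rejection or no response at all, results in no action,
    no matter how confident the model is.
    """
    if human_decision is Decision.APPROVE:
        return recommendation.action
    return None


rec = Recommendation(
    action="escalate_alert",
    confidence=0.99,
    rationale="Pattern matches a known threat signature.",
)

print(resolve(rec, Decision.APPROVE))
print(resolve(rec, Decision.REJECT))
print(resolve(rec, None))
```

The design choice worth noticing is the default: silence means "no". High confidence from the model never substitutes for a human decision.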

Still, the risk is real: once the tech exists, the temptation to use it fully autonomously might be hard to resist.

What Can (and Should) Be Done?

So what do we do with all this information? Here’s a little wish list that might make the future a little less dystopian:

1. Global Regulations

We need binding international treaties that set clear red lines. Ban fully autonomous lethal weapons. Period.

2. Transparency from Tech Companies

Companies building these systems must be open about how they work and must follow strict ethical guidelines.

No more "black box" algorithms deciding who lives and who dies.

3. Public Awareness and Pressure

We, the people, need to stay informed and involved. The more we talk about these issues, the more pressure we can put on our leaders to act responsibly.

4. Ethical AI Research

Let’s funnel more funding into AI that’s explainable, fair, and accountable. If we’re going to use autonomous tech, let’s at least make sure it follows high ethical standards.

The Bottom Line

Autonomous weapons bring a whole lot of "wow" and an equal dose of "wait, what?": they’re powerful, efficient, and full of promise. But they’re also riddled with ethical potholes that could shake the foundations of modern warfare and society.

At the end of the day, the real question isn’t about what technology can do. It’s about what we want it to do, and where we’re willing to draw the line.

So, do we let the machines take over, or do we stay in the driver’s seat?

The future's not written yet, and that gives us the power to shape it — wisely.

All images in this post were generated using AI tools.

Category: AI Ethics

Author: Marcus Gray